Table of Contents

- Note

- The example is based on Litvinenko, Sun, Genton and Keyes, Likelihood Approximation With H-Matrices For Large Spatial Datasets (arXiv:1709.04419).

Problem Description

The number of measurements that must be processed for statistical modeling in environmental applications is usually very large, and these measurements may be located irregularly across a given geographical region. This makes the computing process expensive and the data difficult to manage.

These data are frequently modeled as a realization from a stationary Gaussian spatial random field. Specifically, we let \(\mathbf{Z}=\{Z(\mathbf{s}_1),\ldots,Z(\mathbf{s}_n)\}^\top\), where \(Z(\mathbf{s})\) is a Gaussian random field indexed by a spatial location \(\mathbf{s} \in \Bbb{R}^d\), \(d\geq 1\). Then, we assume that \(\mathbf{Z}\) has mean zero and a stationary parametric covariance function

\[ C(\mathbf{h};{\boldsymbol \theta})=\hbox{cov}\{Z(\mathbf{s}),Z(\mathbf{s}+\mathbf{h})\}, \]

where \(\mathbf{h}\in\Bbb{R}^d\) is a spatial lag vector and \({\boldsymbol \theta}\in\Bbb{R}^q\) is the unknown parameter vector of interest. Statistical inferences about \({\boldsymbol \theta}\) are often based on the joint Gaussian log-likelihood function:

\[ \mathcal{L}(\mathbf{\theta})=-\frac{n}{2}\log(2\pi) - \frac{1}{2}\log \vert \mathbf{C}(\mathbf{\theta}) \vert-\frac{1}{2}\mathbf{Z}^\top \mathbf{C}(\mathbf{\theta})^{-1}\mathbf{Z}, \]

where the covariance matrix \(\mathbf{C}(\mathbf{\theta})\) has entries \(C(\mathbf{s}_i-\mathbf{s}_j;{\boldsymbol \theta})\), \(i,j=1,\ldots,n\). The maximum likelihood estimator of \({\boldsymbol \theta}\) is the value \(\widehat{\boldsymbol \theta}\) that maximizes \(\mathcal{L}\). When the sample size \(n\) is large, the evaluation of \(\mathcal{L}\) becomes challenging, due to the computation of the inverse and log-determinant of the \(n\)-by- \(n\) covariance matrix \(\mathbf{C}(\mathbf{\theta})\). Indeed, this requires \({\cal O}(n^2)\) memory and \({\cal O}(n^3)\) computational steps. Hence, scalable methods that can process larger sample sizes are needed which in this example are provided by \(\mathcal{H}\)-matrices and their arithmetic.

Matérn Covariance Function

Among the many covariance models available, the Matérn family has gained widespread interest in recent years. The Matérn form of spatial correlations was introduced into statistics as a flexible parametric class, with one parameter determining the smoothness of the underlying spatial random field.

The Matérn covariance depends only on the distance \(h:=\Vert \mathbf{s}-\mathbf{s}'\Vert \), where \(\mathbf{s}\) and \(\mathbf{s}'\) are any two spatial locations. The Matérn class of covariance functions is defined as

\[ C(h;{\boldsymbol\theta})=\frac{\sigma^2}{2^{\nu-1}\Gamma(\nu)}\left(\frac{h}{\ell}\right)^\nu K_\nu\left(\frac{h}{\ell}\right), \]

with \({\boldsymbol\theta}=(\sigma^2,\ell,\nu)^\top\); where \(\sigma^2\) is the variance; \(\nu>0\) controls the smoothness of the random field, with larger values of \(\nu\) corresponding to smoother fields; and \(\ell>0\) is a spatial range parameter that measures how quickly the correlation of the random field decays with distance, with larger \(\ell\) corresponding to a faster decay (keeping \(\nu\) fixed). Here \({\mathcal{K}}_\nu\) denotes the modified Bessel function of the second kind of order \(\nu\). When \(\nu=1/2\), the Matérn covariance function reduces to the exponential covariance model and describes a rough field. The value \(\nu=\infty\) corresponds to a Gaussian covariance model that describes a very smooth, infinitely differentiable field. Random fields with a Matérn covariance function are \(\lfloor \nu-1 \rfloor\) times mean square differentiable.

Hierarchical Approximation of Gaussian Log-Likelihood

We use the \(\mathcal{H}\)-matrix technique to approximate the Gaussian likelihood function. The \(\mathcal{H}\)-matrix approximation of the exact log-likelihood \(\mathcal{L}({\boldsymbol\theta})\) is defined by \(\tilde{\mathcal{L}}({\boldsymbol\theta})\):

\[ \tilde{\mathcal{L}}({\boldsymbol\theta})=-\frac{n}{2}\log(2\pi) - \sum_{i=1}^n\log \{\tilde{\mathbf{L}}_{ii}({\boldsymbol\theta})\}-\frac{1}{2}\mathbf{v}({\boldsymbol\theta})^\top \mathbf{v}({\boldsymbol\theta}), \]

where \(\tilde{\mathbf{L}}({\boldsymbol\theta})\) is the \(\mathcal{H}\)-matrix approximation of the Cholesky factor \(\mathbf{L}({\boldsymbol\theta})\) in \(\mathbf{C}({\boldsymbol\theta})=\mathbf{L}({\boldsymbol\theta})\mathbf{L}({\boldsymbol\theta})^\top\), and \(\mathbf{v}({\boldsymbol\theta}) \) is the solution of the linear system \(\tilde{\mathbf{L}}({\boldsymbol\theta})\mathbf{v}({\boldsymbol\theta})=\mathbf{Z}\).

Since the representation of the dense \(\boldsymbol C(\theta)\) requires quadratic storage complexity, approximation is already applied here, e.g., \(\tilde C(\theta)\) is computed instead as an \(\mathcal{H}\)-matrix approximation of \(\boldsymbol C(\theta)\). With this, we obtain \(\tilde{\mathbf{L}}({\boldsymbol\theta})\) as the Cholesky factor in the factorization

\[ \mathbf{\tilde C}({\boldsymbol\theta})=\mathbf{\tilde L}({\boldsymbol\theta})\mathbf{\tilde L}({\boldsymbol\theta})^\top \]

For the \(\mathcal{H}\)-matrix approximation \(\tilde{\mathcal{L}}({\boldsymbol\theta})\) we can either choose a constant rank \(k\), e.g., all low-rank subblocks of the matrix are of rank \(k\), or a fixed accuracy \(\varepsilon > 0\). In the latter case, the rank per subblock is adaptively chosen (see also Accuracy Control).

Of further interest is the value \(\hat \theta\) which maximizes \(\mathcal{L}(\theta)\), which is a three dimensional optimization problem for \((\sigma^2,\ell,\nu)\).

Implementation

The program starts with the usual inclusion of the corresponding HLIBpro header files and the initialisation of HLIBpro:

In the next step, the coordinate and the rhs data is read from a file:

The function read_data implements the most part. For the actual data file, a text format is assumed in which in the first line the number of coordinates is given. Afterwards, each line contains the coordinate index (counting starts from zero) followed by two spatial coordinates and the value of \(\boldsymbol Z\) is given.

As an example, the following data defines four values of \(\boldsymbol Z\) at the eight points of a unit cube:

The function then looks like:

Construction of Covariance Matrix

Before the \(\mathcal{H}\)-matrix can be constructed, the block layout of the matrix has to be defined. For this, the coordinates are used to partition the index set. Together with an admissibility condition, the partitioning of the \(\mathcal{H}\)-matrix is defined:

For the \(\mathcal{H}\)-matrix, the matrix coefficients have to be computed. HLIBpro provides the Matern coefficient function in the form of TMaternCovCoeffFn, which expects a template argument to define the type in which coordinates are provided. In this example, this corresponds to T2DPoint for two dimensional coordinates.

Furthermore, the parameters \(\sigma, \ell\) and \(\nu\) need to be defined. For now, we assume some fixed values for these (see below for maximization of covariance function):

With this, the coefficient function for the Matern kernel can be defined:

The permutation function converts the numbering from the numberung due to the coordinate indices to the internal numbering of the \(\mathcal{H}\)-matrix.

Equipped with matrix coefficients, we finally can construct the \(\mathcal{H}\)-matrix approximation to \(\boldsymbol C(\theta)\):

Computation of Log-Determinant

First, the cholesky factorization needs to be computed of C:

At this point, C_inv is a linear operator enabling evaluation of \(C^{-1}\) while C_fac holds the entries of the Cholesky factor.

The latter are now of interest as we need to compute the log-determinant. Since \(\tilde L\) is of triangular shape and after switching multiplication with logarithm, a summation of the logarithm of its diagonal elements yields the result:

Computation of Gaussian Log-Likelihood

Finally, we are able to compute the Gaussian log-likelihood \(\mathcal{L}(\theta)\). Only missing is the vector \(v(\theta)\) as the solution of the rhs \(Z\) and the covariance matrix \(C\).

First, the right hand side is set up with the previously read data:

The last step is necessary for the vector to have the same ordering as the \(\mathcal{H}\)-matrix.

Solving the equation system is done iteratively using the Cholesky factorization as a preconditioner.

The stop criterion was chosen to have at most 250 iterations and to reduce the residual by a factor of \(10^{16}\). The CG solver is used since \(C\) is positive definite.

After iteration sol holds the solution.

The log-likelihood LL is then computed following the above formula as

- Remarks

- The formula for

LLfollows the definition of \(\mathcal{L}(\mathbf{\theta})\) with a correctly computed log-determinant, whereas in \(\tilde{\mathcal{L}}({\boldsymbol\theta})\) already the factor \(2\) from the two Cholesky factors is applied cancelling out \(0.5\).

With the finalization of HLIBpro

the program for computing the log-likelihood for a fixed parameter set \(\theta\) is complete.

Example Data

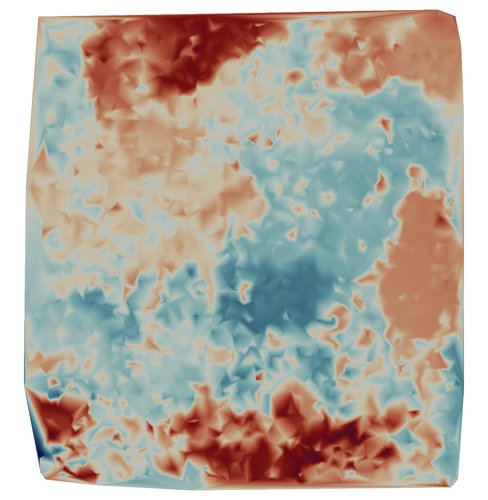

Example data for the program is provided by a soil moisture data set with 4000 data points and corresponding values of \(Z\).

|

| Example Moisture Data |

You may download the data file from hlibpro.com.

Vizualization

The data assigns a parameter to each spatial point. The program ParaView is able to visualize this point data. For this, we write the coordinates and the parameter in a CSV (comma separated) file:

In ParaView, open the CSV file and press Apply. The default options for importing CSV data work with the format of the above export function.

After importing, the data is shown as a table. This table is now converted to point data by choosing Filter > Alphabetical > Table to Points. Choose x for the X column, y for the Y column and z for the Z column. You may also activate 2D Points as the data is two dimensional (z set to zero). After pressing Apply, the points should be visible in the Render View. If not, make sure the eye symbol next to TableToPoints1 is active.

Afterwards, you may use the v values for colorization of the points. Just select in the left pane at Coloring v instead of Solid Color (you may also change the colormap).

ParaView also lets you convert the point cloud into a grid. For this, use Filters > Alphabetical > Delaunay 2D and press Apply.

Modifications

LDL factorization

Instead of using the Cholesky factorization to compute the log-determinant and the preconditioner for solving \(C(\theta) v = Z\), one could use LDL factorization which is more stable in case of rounding errors leading to negative diagonal elements during Cholesky (the covariance matrix becomes ill-conditioned with a larger problem dimension).

By default, HLIBpro computes a block-wise LDL factorization, e.g., computing the inverse of diagonal block instead of performing dense LDL. Since the diagonal elements of \(D\) are needed, this is not possible. Therefore, the point-wise version needs to be performed, which is enabled by setting the corresponding option before factorization:

The computation of the log-determinant also changes, since now only \(D\) has to be considered with \(L\) being unit diagonal matrices:

Three Dimensional Data

As the implementation of the Matern kernel is based on a template argument for the coordinates, it is easy to modify the code to support three dimensional data: just replace T2Point with T3Point. Furthermore, you have to read the third coordinate in read_data:

The Plain Program

Maximization of Log-Likelihood

Function Minimization

The GNU Scientific Library is used to implement the maximization of \(\mathcal{L}(\theta)\) by corresponding minimization functions provided by GSL and we wrap the process into a corresponding function:

The first three parameters provide the start value for the maximization on input and hold the maximal values on output of the function. The last parameter, which corresponds to the problem to maximize is discussed below.

First, the GSL data structures are intialized with the start value and step sizes for each parameter. Since the parameters are entries in an array, we use the above predefined indices to access those.

Next, the function to maximize (minimize) is defined.

Here, eval_logli implements the evaluation of the Gaussian log-likelihood for a given parameter \(\theta = (\sigma,\ell,\nu)\). The second parameter to this function is set to the problem object and cast back inside to be evaluated.

The return value of eval is negated, because GSL performs a function minimization instead of maximization.

The GSL part is continued with setting up the iterative s and starting the actual minimization loop:

Next, a single minimization step is performed and the accuracy criterion tested (tolerance \(\le 10^{-3}\)):

The individual parameters are extracted together with the value of the log-likelihood for the current value of \(\theta\):

Stop the iteration if the accuracy tolerance was achieved or the maximal number of iterations reached.

Log-Likelihood Problem

Above, the Gaussian log-likelihood problem was evaluated for a fixed parameter \(\theta\). Now, we need to evaluate this for many different values and hence, we restructure the code, which splits constant parts, e.g. coordinate managment and partitioning, and variable parts, e.g., \(\mathcal{H}\)-matrix approximation and factorization, into different functions.

The init function will hold coordinate set up, matrix partitioning and the right hand side:

- Remarks

- Since

Z_datais a local object and the default construction for a vector is only using a reference to the vector data, we have to usestd::moveto make a real copy of the vector data. Otherwise, the reference points to freed data.

The eval function will then construct an \(\mathcal{H}\)-matrix, factorize it, compute the log-determinant and solve the equation \(C(\theta) v(\theta) = Z\) for each call during the minimization procedure.

Numerical Experiments

Running the above code (full program see below) with the example data set results in the following (truncated) output:

The Plain Code

The remaining code follows the above program and consists of initialisation and finalisation of HLIBpro as well as actually calling the log-likelihood maximization procedure: